CONSIDERATION 1:

Performance Requirements for Ad-hoc Queries

Druid’s architecture and optimized data format – in contrast to Redshift – enable ad-hoc, sub-second interactive queries at large scale.

Redshift

Redshift implements a caching layer to improve query performance. Even with this, initial query times can take minutes. Repeated queries will run faster once cached, but this does not account for ad-hoc, interactive queries or systems where new data is added constantly.

Redshift does not implement secondary indexes like Druid. Its core architecture is well suited for workloads such as reporting that can rely on predefined queries for performance.

Druid

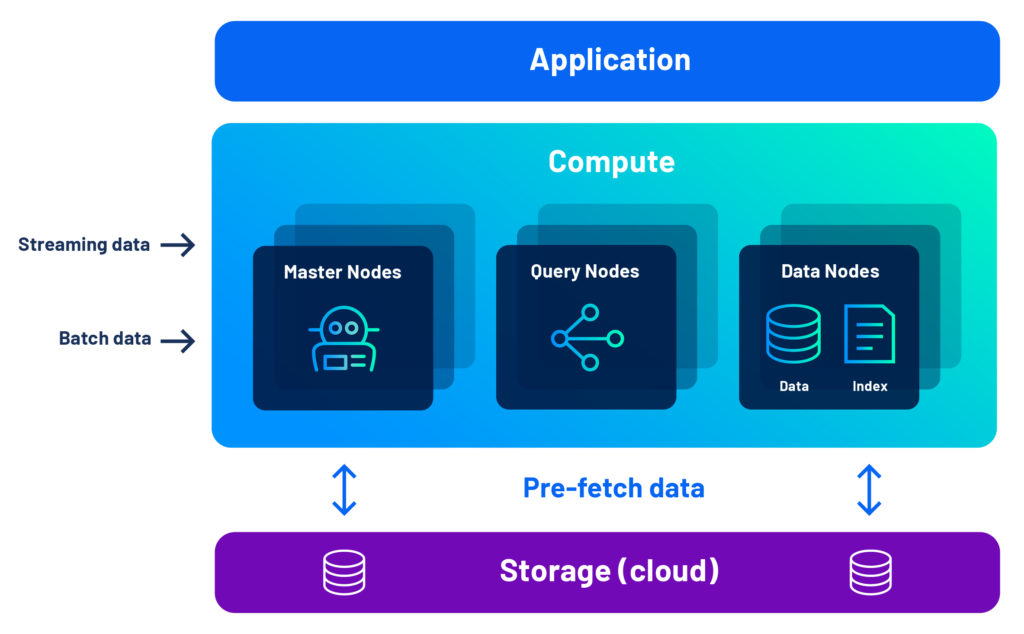

Druid implements a separate storage-compute architecture for flexibility and cost saving measures like data tiering. Crucially, however, Druid’s interactive query engine pre-fetches data to the compute layer, which means that nearly every query will be sub-second, even as data volumes grow since queries never are waiting on a caching algorithm to catch up. With a very efficient storage design that integrates automatic indexing (including inverted indexes to reduce scans) with highly compressed, columnar data, this architecture provides leading price-performance. Learn more here

CONSIDERATION 2:

High Concurrency

Redshift’s design is optimized for infrequent use. High concurrency can become expensive.

Redshift

Redshift’s value proposition is built on a pay-as-you-go model that saves money when your system is not in use. This makes it ideal for relatively infrequent queries with a small number of users, and is why the number of “query slots” is limited to 50. Redshift has a “virtually unlimited” concurrency scaling mode which allows the cluster to grow for more users. While this is fine for short bursts of activity, it cannot economically sustain long-term high concurrency, and there can be delays while these new resources are spun-up. Plus, it does not cover every type of query.

Druid

Druid’s unique architecture handles high concurrency with ease, and it is not unusual for systems to support hundreds and even thousands of concurrent users. Druid is designed to accommodate high queries per second with an efficient use of computing resources. Features including intelligent QoS and tiering guarantee performance prioritization for mixed workloads.

CONSIDERATION 3:

Real-Time Streaming Data Ingestion

Redshift can connect to streaming sources, but real-time performance requires major effort and lock-in to their proprietary systems.

Redshift

Redshift has connectors to streaming data but can only ingest it in batch mode. This makes it unsuitable for real-time or even near real-time analytics. Even with Kinesis Firehose, a service to deliver streams to Redshift, materialized views must be created and maintained by administrators and special care must be taken to avoid duplicate records. Kinesis Firehose also does not address Kafka, a more widely used streaming technology.

Druid

With native support for both Kafka and Kinesis, you do not need a connector to install and maintain in order to ingest real-time data. Druid can query streaming data the moment it arrives at the cluster, even millions of events per second. There’s no need to wait as it makes its way to storage. Further, because Druid ingests streaming data in an event-by-event manner, it automatically ensures exactly-once ingestion. Learn more here

CONSIDERATION 4:

Scalability

Redshift’s architecture is not designed to scale out, which may become problematic for rapidly growing applications.

Redshift

Redshift was not designed with separate storage and compute. Redshift subsequently offered an option to scale compute separately from storage. Even with this option, however, scaling out or even up is not easy to do. There are only limited options for scaling, requiring “just a few hours” at best. To get this time down to “minutes” requires an add-on service at additional cost. Also, without support for secondary indexes, customers must depend on “brute force” to get interactivity and high concurrency. Yet with limited options, even this strategy will be a challenge for administrators to do quickly and cost effectively.

Druid

Druid is a services-oriented architecture with independent, loosely-coupled services for ingestion, query, and orchestration. A Druid cluster has 3 major node types, each of them independently scalable:

- Data nodes for ingestion and storage

- Query nodes for processing and distribution

- Master nodes for cluster health and load balancing

This gives administrators fine-grained control and allows innovative, cost-saving tiering to put less important or older data on cheaper systems. Further, there is no limit to how many nodes you can have, with some Druid applications using thousands of nodes. Learn more about Druid’s scalability and non-stop reliability.

CONSIDERATION 5:

Deployment Options

Druid provides a more open deployment model across open source, hybrid managed, and fully-managed DBaaS options.

Redshift

For many companies, a proprietary, fully-managed cloud is a good choice. But this can be problematic for some regulated industries that require more control.

Druid

Druid is open source, so you are not locked-in to a particular vendor. Imply offers flexible cloud deployments for Druid, including a fully-managed DBaaS with Imply Polaris. Imply’s Enterprise Hybrid is co-managed by you and Imply on your cloud, with you in control. You determine when updates happen, giving you time to fully test your application. Additionally, Imply’s Enterprise solution is ready for organizations that still need to deploy and completely control their own systems.

Hear From a Customer

Learn why Twitch and TrafficGuard switched from Redshift to Druid for their analytics apps.

Druid’s Architecture Advantage

With Druid, you get the performance advantage of a shared-nothing cluster, combined with the flexibility of separate compute and storage, thanks to our unique combination of pre-fetch, data segments, and multi-level indexing.

Developers love Druid because it gives their analytics applications the interactivity, concurrency, and resilience they need.

In a world full of databases, learn how Apache Druid makes real-time analytics apps a reality. Read our latest whitepaper on Druid Architecture & Concepts

Leading companies leveraging Apache Druid and Imply

“By using Apache Druid and Imply, we can ingest multiple events straight from Kafka and our data lake, ensuring advertisers have the information they need for successful campaigns in real-time.”

Cisco ThousandEyes

“To build our industry-leading solutions, we leverage the most advanced technologies, including Imply and Druid, which provides an interactive, highly scalable, and real-time analytics engine, helping us create differentiated offerings.”

GameAnalytics

“We wanted to build a customer-facing analytics application that combined the performance of pre-computed queries with the ability to issue arbitrary ad-hoc queries without restrictions. We selected Imply and Druid as the engine for our analytics application, as they are built from the ground up for interactive analytics at scale.”

Sift

“Imply and Druid offer a unique set of benefits to Sift as the analytics engine behind Watchtower, our automated monitoring tool. Imply provides us with real-time data ingestion, the ability to aggregate data by a variety of dimensions from thousands of servers, and the capacity to query across a moving time window with on-demand analysis and visualization.”

Strivr

“We chose Imply and Druid as our analytics database due to its scalable and cost-effective analytics capabilities, as well as its flexibility to analyze data across multiple dimensions. It is key to powering the analytics engine behind our interactive, customer-facing dashboards surfacing insights derived over telemetry data from immersive experiences.”

Plaid

“Four things are crucial for observability analytics; interactive queries, scale, real-time ingest, and price/performance. That is why we chose Imply and Druid.”

© 2023 Imply. All rights reserved. Imply and the Imply logo, are trademarks of Imply Data, Inc. in the U.S. and/or other countries. Apache Druid, Druid and the Druid logo are either registered trademarks or trademarks of the Apache Software Foundation in the USA and/or other countries. All other marks and logos are the property of their respective owners.