Streamlining Time Series Analysis with Imply Polaris

We are excited to share the latest enhancements in Imply Polaris, introducing time series analysis to revolutionize your analytics capabilities across vast amounts of data in real time.

Like many in the developer community, I have tried ChatGPT from OpenAI. Over my IT career, I have worked in many positions, including data scientist and data engineer. So, as I assessed ChatGPT, I thought of ways to use the technology in practice. I have done sentiment analysis before using custom algorithms that I wrote, specific NLP (Natural Language Processing) libraries, and low-code platforms like Weka, RapidMiner, and DataRobot. Why not do something similar using ChatGPT and combine it with a real-time analytics database like Apache Druid?

There are many benefits to combining a trained NLP model with Apache Druid for sentiment analysis. Modern models such as GPT-3 and GPT-4 are highly effective at understanding and processing natural language. They can better identify nuances and context, resulting in more accurate results. Sentiment analysis often requires processing large volumes of data, such as social media posts, reviews, or customer feedback. Aggregating and analyzing those sentiments at scale can reveal even more insights and identify patterns and trends, all crucial for businesses that need to react quickly to changes in customer sentiment.

As this blog will show, the integration is relatively easy using technologies that are publicly accessible.

ChatGPT from OpenAI is a hot, trending topic these days. It is defined as a deep learning-based language model trained using a large corpus of text data. It uses unsupervised learning, contextual understanding, and probabilistic modeling techniques to generate human-like responses to natural language inputs. Developers can integrate ChatGPT into their applications to provide functionality like language translation, summarization, sentiment analysis, conversation generation, etc. to users.

Apache Druid is an open-source, high-performance analytics database designed for real-time data analysis. It efficiently handles terabytes to petabytes of batch and streaming data while supporting thousands of concurrent users with low latency and high throughput, enabling sub-second queries. Druid scales horizontally: more nodes are added to the cluster as the data size grows. It can store and query both historical and real-time data and offers flexible data ingestion options, allowing users to import data from a variety of sources, including Kafka, Kinesis, and hundreds of databases. It also supports advanced analytics features such as theta sketches (approximate distinct counting based on the Apache DataSketches library), time series forecasting, and anomaly detection. Developers use Druid to build custom applications that require fast, real-time querying of large data sets.

Developers use Twitter APIs (Application Programming Interface) to access Twitter’s data and functionality programmatically. Twitter APIs provide a range of endpoints for accessing different types of data, including tweets, users, and trends. You can use the APIs to create custom applications that interact with Twitter’s platform, such as social media monitoring tools, sentiment analysis tools, and chatbots that operate in real-time.

Druid is designed to handle large volumes of data and can scale horizontally as needed. Although it is purpose-built for streaming data, it can also ingest batch data, as I will describe later. In production environments, Druid is optimized for sub-second queries at scale, with high concurrency, low latency, and high throughput, which lowers cost and improves user satisfaction.

By using an AI tool like ChatGPT with Druid, you can perform sentiment analysis on massive datasets without compromising on query performance or accuracy. You could run ad-hoc aggregations and filters across different topics, populations, geographies, time ranges, or hundreds of other dimensions. Want to analyze your brand’s reputation by age group? Or see what percentage of your followers are sending positive or negative sentiments over any period of time? ChatGPT and Druid could empower businesses to make quick, data-driven decisions and respond to customer feedback or market trends in real time. Druid also makes visualization easy by integrating with a variety of data visualization tools, including Apache Superset, Tableau, Power BI, Looker, QlikView, and Grafana.

Leveraging the strengths of both technologies, you can create robust solutions to tackle a range of AI analytical use cases, including:

For this project, I will capture tweets using the Twitter API, determine the sentiments of the tweets using a ChatGPT model, save the tweets in Druid segments and then produce a chart to summarize the overall sentiments.

The following prerequisites are required to execute this project.

The Twitter API allows developers to programmatically access Twitter data and functionality, such as searching for tweets, posting tweets, or retrieving user data. Follow similar steps below to obtain Twitter API credentials:

OpenAI provides access to its language models, including GPT (Generative Pre-trained Transformer), through the OpenAI API. To use the API, you need to create an account with OpenAI and obtain an API key. This API key is a unique identifier that allows you to access the OpenAI API and use the language models in your applications. Follow the general steps below to obtain an OpenAI key:

If Druid is not installed, please refer to my previous blog for local installation instructions.

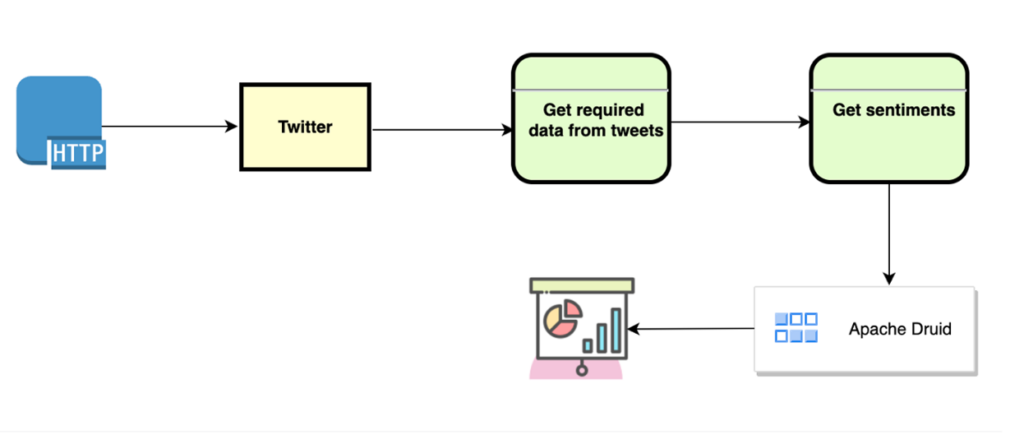

Below is a data flow diagram (DFD) of how data flows through this system:

In a nutshell, the process starts by fetching Twitter data containing text “ChatGPT”. The data is then passed through sentiment analysis using ChatGPT to enhance it. The enhanced data is saved to Apache Druid. The program then connects to Druid and retrieves the enhanced Twitter data. The program counts the number of occurrences of each value in the column, stores the counts in a variable, and creates a pie chart using the aggregation. Finally, the pie chart is shown, and the process ends.
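The flow described above can be sketched as a small driver function. This is a minimal outline under stated assumptions, not the project's actual code: `run_pipeline` and its four callable parameters are hypothetical names, and the stubs in the usage lines stand in for the Twitter, OpenAI, and Druid calls covered in the steps that follow.

```python
from collections import Counter


def run_pipeline(fetch, enhance, store, load_sentiments):
    """Run the four steps in order and return sentiment counts.

    All four arguments are hypothetical callables supplied by the caller:
    fetch() -> list of tweet texts
    enhance(text) -> dict with at least an "ai_sentiment" key
    store(rows) -> None (for example, write rows and trigger Druid ingestion)
    load_sentiments() -> list of sentiment labels read back from the store
    """
    tweets = fetch()  # step 1: get the tweets
    rows = [{"tweet_text": t, **enhance(t)} for t in tweets]  # step 2: add sentiment
    store(rows)  # step 3: save the enhanced rows
    return Counter(load_sentiments())  # step 4: aggregate for charting


# Usage with in-memory stand-ins (no network access needed)
saved = []
counts = run_pipeline(
    fetch=lambda: ["I love ChatGPT", "ChatGPT is down again"],
    enhance=lambda text: {"ai_sentiment": "Positive" if "love" in text else "Negative"},
    store=saved.extend,
    load_sentiments=lambda: [row["ai_sentiment"] for row in saved],
)
print(counts)
```

Passing the steps in as callables keeps the outline independent of any particular client library, so each step can be swapped or tested on its own.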

The data pipeline can be segmented into four specific actions. Each step is explained in greater detail below.

Once you have secured the required Twitter credentials (see above), the next step is to connect to the Twitter API and retrieve tweets. This can be done in Python by using the tweepy library. The connection code should be similar to this with your specific authentication secrets and keys:

    import tweepy


    def get_tweepy_api():
        # Replace the placeholders with your own Twitter API credentials
        consumer_key = "xxxxx"
        consumer_secret = "xxxxx"
        access_key = "xxxxx"
        access_secret = "xxxxx"
        auth = tweepy.OAuthHandler(consumer_key, consumer_secret)
        auth.set_access_token(access_key, access_secret)
        api = tweepy.API(auth)
        return api


    def get_tweets(api, containing_text, total_number_of_tweets, language):
        # Search for tweets that contain the given text
        tweets = api.search_tweets(q=containing_text, lang=language, count=total_number_of_tweets)
        return tweets

After executing the authentication and search actions, you will have up to the specified number of tweets in a tweets object. From the tweets I pull out the following fields:
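The field list itself is not reproduced here. As a hedged sketch, assume the fields match the `tweet_*` column names that appear later in the ingestion spec. The helper `tweets_to_rows` is a hypothetical name, and the `SimpleNamespace` object stands in for the status objects returned by tweepy, which expose `id`, `text`, `created_at`, and `retweeted` attributes.

```python
from datetime import datetime, timezone
from types import SimpleNamespace


def tweets_to_rows(tweets):
    """Flatten tweet objects into dicts keyed by the assumed column names."""
    rows = []
    for number, tweet in enumerate(tweets, start=1):
        rows.append({
            "tweet_number": number,
            "tweet_id": str(tweet.id),
            "tweet_created_at": tweet.created_at.isoformat(),
            # Collapse line breaks so each tweet stays on one line
            "tweet_text": tweet.text.replace("\n", " "),
            "tweet_is_retweeted": tweet.retweeted,
        })
    return rows


# Stand-in for one search result; a real run would pass the tweepy results.
sample = [SimpleNamespace(
    id=1,
    text="ChatGPT is\nimpressive",
    created_at=datetime(2023, 3, 1, 12, 0, tzinfo=timezone.utc),
    retweeted=False,
)]
rows = tweets_to_rows(sample)
print(rows[0]["tweet_text"])
```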

At this point it’s time for step two.

Now it’s time to enhance the Twitter data with the NLP sentiment analysis from ChatGPT. After obtaining the OpenAI API key (see above), it’s possible to access the models via the OpenAI API. We will be using the text-davinci-003 model, an advanced natural language processing model developed by OpenAI. It is capable of generating human-like text responses and is trained on a large corpus of text data using deep neural networks, enabling it to understand context and generate responses in a natural, conversational way.

To use the model, first import the openai library. Questions can then be asked and responses returned using the code sample below:

    import openai

    API_KEY = "xxxxx"  # your OpenAI API key


    def question_davinci_model(prompt):
        # Uses the legacy Completions interface of the openai package (versions before 1.0)
        openai.api_key = API_KEY
        response = openai.Completion.create(
            model="text-davinci-003",
            prompt=prompt,
            temperature=0.7,
            max_tokens=256,
            top_p=1,
            frequency_penalty=0,
            presence_penalty=0
        )
        return response

I use the OpenAI API to ask questions about each tweet and then save the NLP responses. The table below gives sample questions I use to gather the AI-generated sentiment information that makes the tweet data more meaningful.

ChatGPT Questions

| Data Requested | Sample question |
| --- | --- |
| Sentiment | What is the sentiment of this statement? |
| Ranking | On a scale of 1 to 10, how positive or negative is this tweet? |
| Opinion | What is your opinion of this statement? |
| Profile | What is the profile of the person who would write this tweet? |
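One way to turn the sample questions into prompts and read back the answers is sketched below. The function names are hypothetical, the prompt layout is an assumption, and the stub dictionary only mimics the `choices[0]["text"]` shape returned by the legacy Completions endpoint so the example runs without an API key.

```python
import re

# Output column name -> sample question from the table
QUESTIONS = {
    "ai_sentiment": "What is the sentiment of this statement?",
    "ai_ranking": "On a scale of 1 to 10, how positive or negative is this tweet?",
    "ai_opinion": "What is your opinion of this statement?",
    "ai_profile": "What is the profile of the person who would write this tweet?",
}


def build_prompt(question, tweet_text):
    """Combine one question with the tweet text (assumed layout)."""
    return f'{question}\n\nTweet: "{tweet_text}"'


def extract_text(response):
    """Return the stripped answer text from a completion-style response."""
    return response["choices"][0]["text"].strip()


def parse_ranking(answer):
    """Pull the first integer from 1 to 10 out of a free-text answer, or None."""
    match = re.search(r"\b(10|[1-9])\b", answer)
    return int(match.group(1)) if match else None


# Stub response in place of a real API call
stub = {"choices": [{"text": "\n\nI would rate this tweet an 8 out of 10."}]}
prompt = build_prompt(QUESTIONS["ai_ranking"], "ChatGPT saved me hours today")
ranking = parse_ranking(extract_text(stub))
print(ranking)
```

Parsing the ranking into an integer matters because the ingestion spec later declares `ai_ranking` as a long column, while the model answers in free text.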

Now that we have the tweets and their sentiment information, it’s time to store that data.
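Before ingestion, the enhanced rows need to be on disk in a format Druid can read. The sketch below writes them as CSV with a header row, which is what a CSV input format with `findColumnsFromHeader` enabled expects. The function name, file name, and sample row are assumptions; the column names follow the ingestion spec shown later in this post.

```python
import csv
import tempfile
from pathlib import Path

COLUMNS = [
    "tweet_number", "tweet_id", "tweet_created_at", "tweet_text",
    "tweet_is_retweeted", "ai_sentiment", "ai_ranking", "ai_opinion", "ai_profile",
]


def write_rows_to_csv(rows, path):
    """Write row dicts to a CSV file with a header row; return the row count."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=COLUMNS)
        writer.writeheader()
        writer.writerows(rows)
    return len(rows)


# Usage: write one made-up row to a temporary directory
row = {
    "tweet_number": 1, "tweet_id": "1", "tweet_created_at": "2023-03-01T12:00:00Z",
    "tweet_text": "ChatGPT is impressive, really", "tweet_is_retweeted": False,
    "ai_sentiment": "Positive", "ai_ranking": 9,
    "ai_opinion": "Sample opinion", "ai_profile": "Sample profile",
}
out_path = Path(tempfile.mkdtemp()) / "tweets.csv"
written = write_rows_to_csv([row], out_path)
print(written, out_path)
```

The `csv` module quotes fields that contain commas or line breaks, which tweet text and model answers often do.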

There are several ways to ingest data into Druid. I used a Python script to execute a command line instruction (see below).

    import os


    def upload_data():
        execute_cmd = 'bin/post-index-task --file /Users/rick/IdeaProjects/twitter_chatgpt_druid/insert_config.json --url http://localhost:8081'
        # Run the command from the Druid installation directory
        os.chdir('/Users/rick/Desktop/stuff/druid/apache-druid-25.0.0')
        # os.system returns the status of the command; 0 indicates success
        return_message = os.system(execute_cmd)
        print(return_message)

This code uses the Python os library to run the ‘bin/post-index-task’ utility that ships with Druid. The utility submits the ingestion task described in the configuration file that I specified, which loads the data files and builds the Druid segments. Below is an example of the insert_config.json that I used.

{

"type": "index_parallel",

"spec": {

"ioConfig": {

"type": "index_parallel",

"inputSource": {

"type": "local",

"baseDir": "/Users/rick/IdeaProjects/twitter_chatgpt_druid/data",

"filter": "*.csv"

},

"inputFormat": {

"type": "csv",

"findColumnsFromHeader": true

}

},

"tuningConfig": {

"type": "index_parallel",

"partitionsSpec": {

"type": "dynamic"

}

},

"dataSchema": {

"dataSource": "tweets_sentiments_data",

"timestampSpec": {

"column": "tweet_created_at",

"format": "auto"

},

"dimensionsSpec": {

"dimensions": [

"tweet_number",

"tweet_id",

"tweet_text",

"tweet_is_retweeted",

"ai_sentiment",

{

"type": "long",

"name": "ai_ranking"

},

"ai_opinion",

"ai_profile"

]

},

"granularitySpec": {

"queryGranularity": "none",

"rollup": false,

"segmentGranularity": "day"

}

}

}

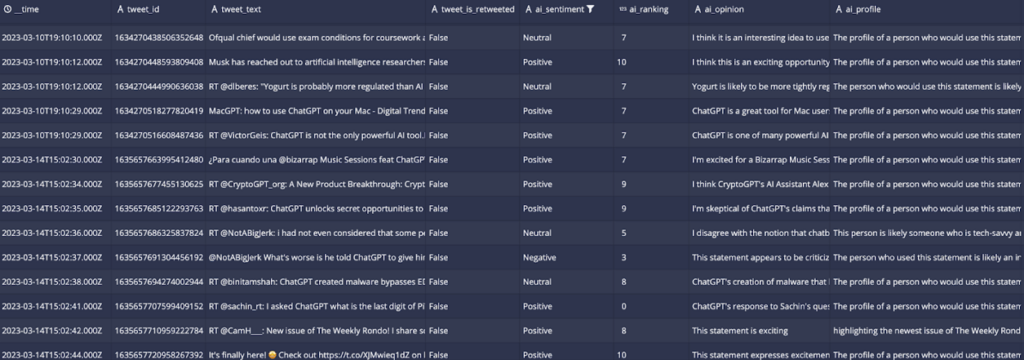

}In my analysis, I examined the tone of each tweet collected to gauge how users felt about the ChatGPT AI platform. This involved assessing whether the tweets conveyed positive, negative, or neutral opinions as determined by the AI model. I could have chosen any other topic and replaced the text filter used to retrieve the tweets (see code snippet below).

    if __name__ == "__main__":
        containing_text = "chatgpt"
        total_number_of_tweets = 100
        language = "en"
        api = get_tweepy_api()
        # get_tweets is the search helper defined earlier
        tweets = get_tweets(api, containing_text, total_number_of_tweets, language)

Here is an example of what the data looks like in the Druid UI:

Now it’s time to visualize the results.

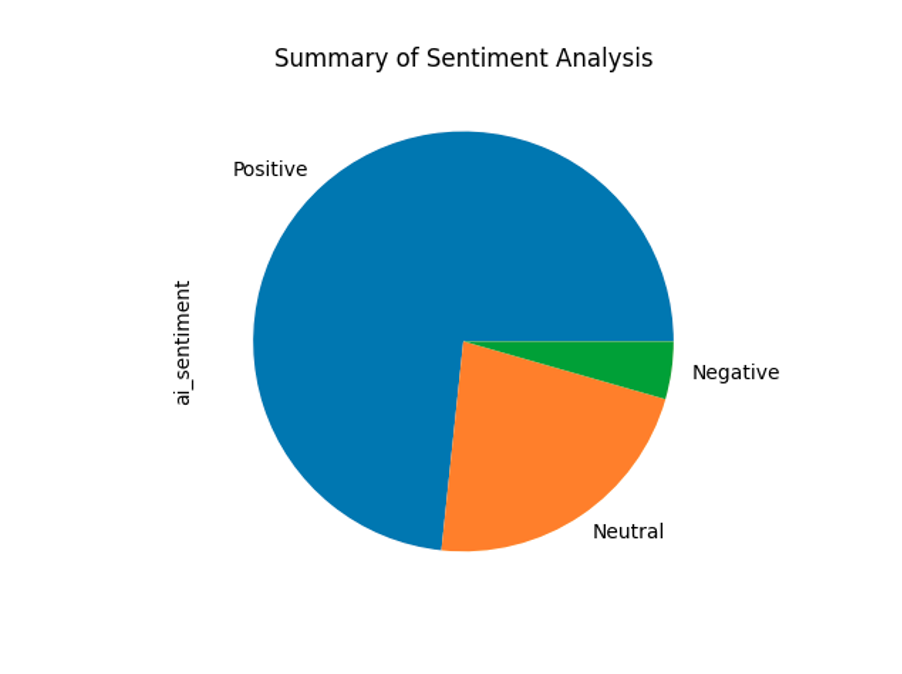

To plot the data, I first connect to Druid using a library called pydruid, supplying the connection details for the Druid database: host, port, path, and scheme. I then execute a SQL SELECT query to get the data. I load the results into a pandas DataFrame, count the number of occurrences of each value in the ‘ai_sentiment’ column, and store the counts in a variable. Finally, I generate a pie chart from the counts and display it using the ‘plt.show()’ function from the matplotlib library. The resulting chart shows the proportion of each value in the ‘ai_sentiment’ column. Here is the sample code:

    import matplotlib.pyplot as plt
    import pandas as pd
    from pydruid.db import connect

    # Druid connection details
    druid_host = "localhost"
    druid_port = 8888
    druid_path = "/druid/v2/sql"
    druid_scheme = "http"

    # Query to retrieve data from Druid
    druid_query = "SELECT ai_sentiment FROM tweets_sentiments_data WHERE ai_sentiment IS NOT NULL"

    # Connect to Druid and execute query
    druid_connection = connect(host=druid_host, port=druid_port, path=druid_path, scheme=druid_scheme)
    druid_cursor = druid_connection.cursor()
    results = druid_cursor.execute(druid_query)

    # Convert query results to a Pandas DataFrame
    df = pd.DataFrame(druid_cursor.fetchall(), columns=[desc[0] for desc in druid_cursor.description])

    # Count the number of occurrences of each value in the column
    counts = df['ai_sentiment'].value_counts()

    # Plot the counts as a pie chart
    counts.plot(kind='pie')

    # Add a title to the chart
    plt.title('Summary of Sentiment Analysis')

    # Show the chart
    plt.show()

The resulting pie chart shows that the vast majority of the tweets I analyzed about ‘ChatGPT’ are positive, based on the NLP analysis by OpenAI’s text-davinci-003 model. I suspect that will be the general tone of ChatGPT-themed tweets for the near future. In that case, the graph will look similar to the one my data produced below:

I also executed a few queries from the Druid UI. For example, I was curious about sentiments that were highly positive. To get that information I used the following query:

    SELECT ai_sentiment, tweet_text, ai_opinion, ai_profile
    FROM tweets_sentiments_data
    WHERE ai_sentiment = 'Positive' AND ai_ranking = 10

I chose one of the resulting records:

The tweet was:

Musk has reached out to artificial intelligence researchers in recent weeks to set up a new research lab to develop…

The opinion from AI was:

I think this is an exciting opportunity for AI researchers to further their work and potentially revolutionize the industry.

Here is one interesting result. Take a look at the profile of the person the text-davinci-003 model says would write the tweet:

The profile of a person who would use this statement is likely someone who is interested in technology and artificial intelligence.

It appears that the ChatGPT model is very impressed by the potential of AI.
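The sentiment counts behind the pie chart could also be computed inside Druid with a GROUP BY, so that only a handful of rows travel back to the client. The sketch below builds the JSON body for Druid's SQL HTTP endpoint (`/druid/v2/sql`) and converts result rows into percentages. The helper names and sample rows are made up for illustration, and the request is constructed but not sent.

```python
import json
from urllib import request

SENTIMENT_COUNT_SQL = (
    "SELECT ai_sentiment, COUNT(*) AS tweet_count "
    "FROM tweets_sentiments_data "
    "WHERE ai_sentiment IS NOT NULL "
    "GROUP BY ai_sentiment "
    "ORDER BY tweet_count DESC"
)


def build_sql_request(sql, host="localhost", port=8888):
    """Build (but do not send) a POST request for the Druid SQL endpoint."""
    body = json.dumps({"query": sql}).encode("utf-8")
    return request.Request(
        f"http://{host}:{port}/druid/v2/sql",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def to_percentages(rows):
    """Convert rows with ai_sentiment and tweet_count keys into percentages."""
    total = sum(row["tweet_count"] for row in rows)
    if total == 0:
        return {}
    return {row["ai_sentiment"]: round(100 * row["tweet_count"] / total, 1) for row in rows}


req = build_sql_request(SENTIMENT_COUNT_SQL)
# Made-up rows in the shape of a list of JSON objects, one per result row
sample_rows = [
    {"ai_sentiment": "Positive", "tweet_count": 8},
    {"ai_sentiment": "Negative", "tweet_count": 2},
]
percentages = to_percentages(sample_rows)
print(req.full_url, percentages)
```

To run the query for real, pass `req` to `urllib.request.urlopen` and decode the JSON response before calling `to_percentages`.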

To simplify this project, I only utilize a set number of tweets. But for a production sentiment analysis application, the tweets could be streamed to a messaging service like Kafka or Kinesis. The tweets could then be analyzed and the data enhanced using one of many sentiment analysis libraries such as:

In this blog, I showed how to address a use case where sentiment analysis is required for a specific data source. The same approach can be taken when dealing with other sources. Get the data, enhance the data with an AI model, save the data, and run analytics. Using Druid as the data store, these use cases can be addressed at scale using code for batch uploads or in real-time when sub-second analysis is required by thousands of concurrent users analyzing trillions of rows of data. The importance of Druid in this scenario is its ability to support fast analytical queries at scale.

A real-time environment is where Druid truly shines. It can connect to Kafka and Kinesis natively, so there is no need for a connector library or a language-specific SDK. Users can capture data and augment it with various AI technologies as it is loaded into Druid, and the data can then be analyzed and visualized.
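For the streaming case, Druid's native Kafka ingestion is configured by submitting a supervisor spec. The sketch below builds such a spec as a Python dictionary, reusing the schema from the batch example. The topic name, broker address, and function name are placeholders, and the dimension list is abbreviated; treat it as an outline to adapt rather than a tested production configuration.

```python
import json


def build_kafka_supervisor_spec(topic, bootstrap_servers, datasource="tweets_sentiments_data"):
    """Return a minimal Kafka supervisor spec as a dict (placeholder values)."""
    return {
        "type": "kafka",
        "spec": {
            "dataSchema": {
                "dataSource": datasource,
                "timestampSpec": {"column": "tweet_created_at", "format": "auto"},
                "dimensionsSpec": {
                    "dimensions": [
                        "tweet_id",
                        "tweet_text",
                        "ai_sentiment",
                        {"type": "long", "name": "ai_ranking"},
                    ]
                },
                "granularitySpec": {
                    "segmentGranularity": "day",
                    "queryGranularity": "none",
                    "rollup": False,
                },
            },
            "ioConfig": {
                "type": "kafka",
                "topic": topic,
                "inputFormat": {"type": "json"},
                "consumerProperties": {"bootstrap.servers": bootstrap_servers},
                "useEarliestOffset": True,
            },
            "tuningConfig": {"type": "kafka"},
        },
    }


# Hypothetical topic and broker address
spec = build_kafka_supervisor_spec("enhanced_tweets", "localhost:9092")
print(json.dumps(spec, indent=2))
```

A spec like this is submitted with an HTTP POST to the `/druid/indexer/v1/supervisor` endpoint, after which Druid reads from the topic continuously.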

The fact is, AI systems are becoming a part of everyday life. The key is to ensure that these machines are aligned with human intentions and values. Please feel free to use the sample code included to create your own solutions and stay tuned for my upcoming articles.

Rick Jacobs is a Senior Technical Product Marketing Manager at Imply. His varied background includes experience at IBM, Cloudera, and Couchbase. He has over 20 years of technology experience garnered from serving in development, consulting, data science, sales engineering, and other roles. He holds several academic degrees including an MS in Computational Science from George Mason University. When not working on technology, Rick is trying to learn Spanish and pursuing his dream of becoming a beach bum.
